Research Group

Computational Cognitive Science Group

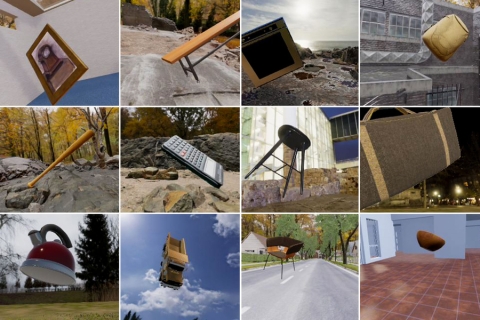

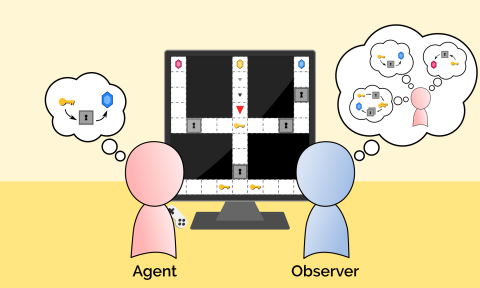

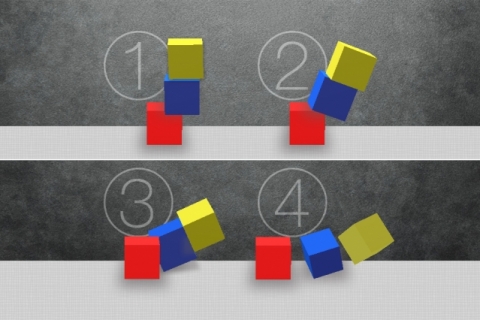

Through a combination of mathematical modeling, computer simulation, and behavioral experiments, we aim to uncover the logic behind the inductive leaps humans make every day. These include constructing perceptual representations, separating “style” and “content” in perception, learning concepts and words, judging similarity or representativeness, inferring causal connections, noticing coincidences, and predicting the future. We approach these topics through behavioral testing of both humans and machines, alongside more traditional formal tools drawn from Bayesian statistics, probability theory, geometry, graph theory, and linear algebra. Our work is driven by two complementary goals: achieving a better understanding of human learning, and building systems that come closer to the capacities of human learners. While our core interests are in human learning and reasoning, we also work actively in machine learning and artificial intelligence. The two topics are inseparable: bringing machine-learning algorithms closer to the capacities of human learning should lead to more powerful AI systems as well as more powerful theoretical paradigms for understanding human cognition. Our current research allows us to construct intuitive theories of core domains, such as intuitive physics, psychology, biology, or social structure.

Contact us

If you would like to contact us about our work, please see the list of members below and reach out to one of the group leads directly.

Last updated Aug 23 '17