PI

Joshua Tenenbaum

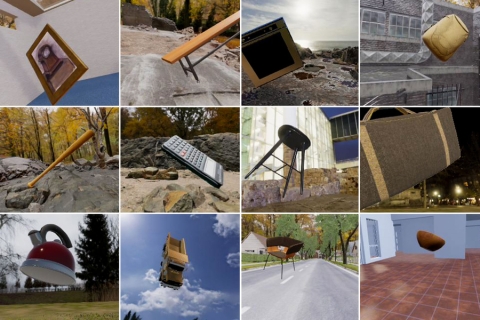

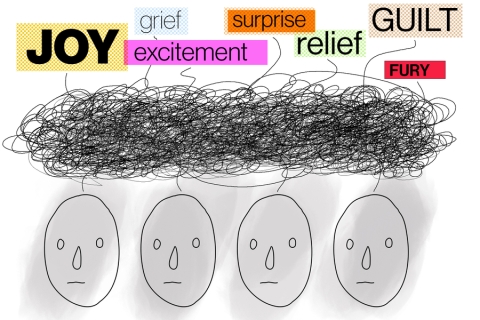

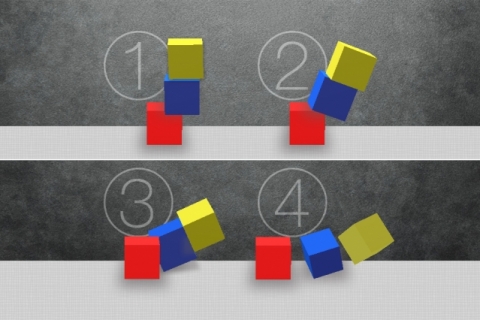

I study the computational basis of human learning and inference. Through a combination of mathematical modeling, computer simulation, and behavioral experiments, I try to uncover the logic behind our everyday inductive leaps: constructing perceptual representations, separating "style" and "content" in perception, learning concepts and words, judging similarity or representativeness, inferring causal connections, noticing coincidences, and predicting the future. I approach these topics with a range of empirical methods (primarily behavioral testing of adults, children, and machines) and formal tools (drawn chiefly from Bayesian statistics and probability theory, but also from geometry, graph theory, and linear algebra). My work is driven by two complementary goals: understanding human learning in computational terms, and building computational systems that come closer to the capacities of human learners.

Josh Tenenbaum is Professor of Computational Cognitive Science in the Department of Brain and Cognitive Sciences at MIT, a principal investigator at MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL), and a thrust leader in the Center for Brains, Minds and Machines (CBMM). His research centers on perception, learning, and common-sense reasoning in humans and machines, with the twin goals of better understanding human intelligence in computational terms and building more human-like intelligence in machines. The machine learning and artificial intelligence algorithms developed by his group are currently used by hundreds of other science and engineering groups around the world.

Last updated Nov 01 '22