PI

Core/Dual

Ted Adelson

Imagine a robot that can make a peanut butter and jelly sandwich with human-level skill. It slides its hand into the bag and skips over the end of the loaf to find two good slices, which it gently extracts. Then the robot uses a knife to scoop a blob of peanut butter from the jar and spreads it over the bread. It does the same with the jelly, understanding that jelly looks and behaves very differently from peanut butter.

What seems a simple task for us is much harder for a robot. This task depends critically on the visual and mechanical properties of materials. The bread is a delicate solid, while the peanut butter and the jelly are both semi-solids, each with a distinct set of optical and mechanical properties.

Vision starts with the eyes and touch starts with the skin, but in both cases, the most important work is done in the brain, where the raw signals are transformed into a meaningful model of the scene. That model lets a person interact with the world. Professor Edward (Ted) Adelson of MIT CSAIL has a background in human and computer vision, but has recently been studying the problems of touch and manipulation. Building sensitive robot fingers to support skilled manipulation was a natural progression of his research.

Prof. Adelson leads the Perceptual Science Group in CSAIL and is part of both the Visual Computing and the Embodied Intelligence Communities of Research. He is the John and Dorothy Wilson Professor of Vision Science at MIT, in the Department of Brain and Cognitive Sciences, and is a member of CSAIL. He is also a member of the National Academy of Sciences, a Life Fellow of the IEEE, and a recipient of the Ken Nakayama Medal in Vision Science. His contributions to multiscale image representation and motion perception have won IEEE Computer Society awards, and he has done pioneering work on the problems of material perception. He introduced the plenoptic function and built the first plenoptic camera. He has also produced some well-known illusions such as the Checker-Shadow Illusion.

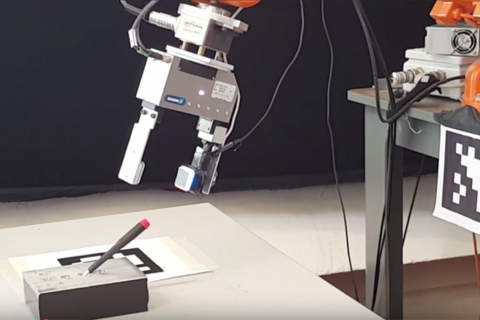

His lab is currently developing soft, sensitive touch sensors that can match or exceed the sensory capabilities of human skin. Prof. Adelson would like to give robots the capabilities that human fingers support, including the ability to skillfully manipulate objects. His robot fingers use a novel technology called GelSight, in which tiny cameras are placed inside soft robotic fingers to enable robots to sense the world around them.

GelSight converts mechanical deformation into image data. The images are processed to infer surface properties (shape, hardness, and friction) as well as mechanical interaction (force, shear, and slip). GelSight can measure 3D surface geometry at the micron scale, and because the signal is inherently geometric, it allows the robot to calculate the pose of the object it is grasping. With these sensors, the researchers can measure tangential force and displacement and assess frictional interactions, including the failure mode of slip; this assessment is essential to successful manipulation.
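To make the link between tangential force, friction, and slip concrete, here is a minimal sketch of the textbook Coulomb friction test, in which a contact starts to slip once the shear force exceeds the friction coefficient times the normal force. It is an illustration only and is not taken from the GelSight software: the function names, the force values, and the friction coefficient are all hypothetical, and a real sensor would have to estimate these quantities from its images.

```python
import math


def slip_margin(normal_force, tangential_force, friction_coefficient):
    """Shear force (N) still available before slip under Coulomb friction.

    normal_force: hypothetical estimate of the pressing force, in newtons.
    tangential_force: hypothetical (fx, fy) shear estimate, in newtons.
    friction_coefficient: assumed unitless Coulomb coefficient.
    A positive result means the contact holds; zero or negative means slip.
    """
    fx, fy = tangential_force
    shear = math.hypot(fx, fy)  # magnitude of the tangential force
    return friction_coefficient * normal_force - shear


def is_slipping(normal_force, tangential_force, friction_coefficient):
    """True when the shear force reaches or exceeds the friction limit."""
    return slip_margin(normal_force, tangential_force, friction_coefficient) <= 0.0


if __name__ == "__main__":
    # Same grasp force and shear, two assumed surface frictions.
    for mu in (0.6, 0.4):
        margin = slip_margin(10.0, (3.0, 4.0), mu)
        print(f"mu={mu}: margin={margin:.2f} N, slipping={is_slipping(10.0, (3.0, 4.0), mu)}")
```

A controller could use a margin like this to tighten its grip before the object starts to slide, which is one reason slip sensing matters for manipulation.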

These strides in robotic touch, when combined with AI and deep learning, are bringing us closer to a world in which robots can exploit touch to assist humans with everyday tasks. For a robot to truly use this sense, it needs a hand and a brain, and it needs to coordinate them in real-world tasks. Prof. Adelson’s lab collaborates with other labs that have expertise in AI, haptics, and robotics; the result is an interdisciplinary research program to advance the state of the art in robot manipulation.


Last updated Feb 03 '22