When you see headlines about artificial intelligence (AI) being used to detect health issues, that’s usually thanks to a hospital providing data to researchers. But such systems aren’t as robust as they could be, because that data usually comes from a single organization.

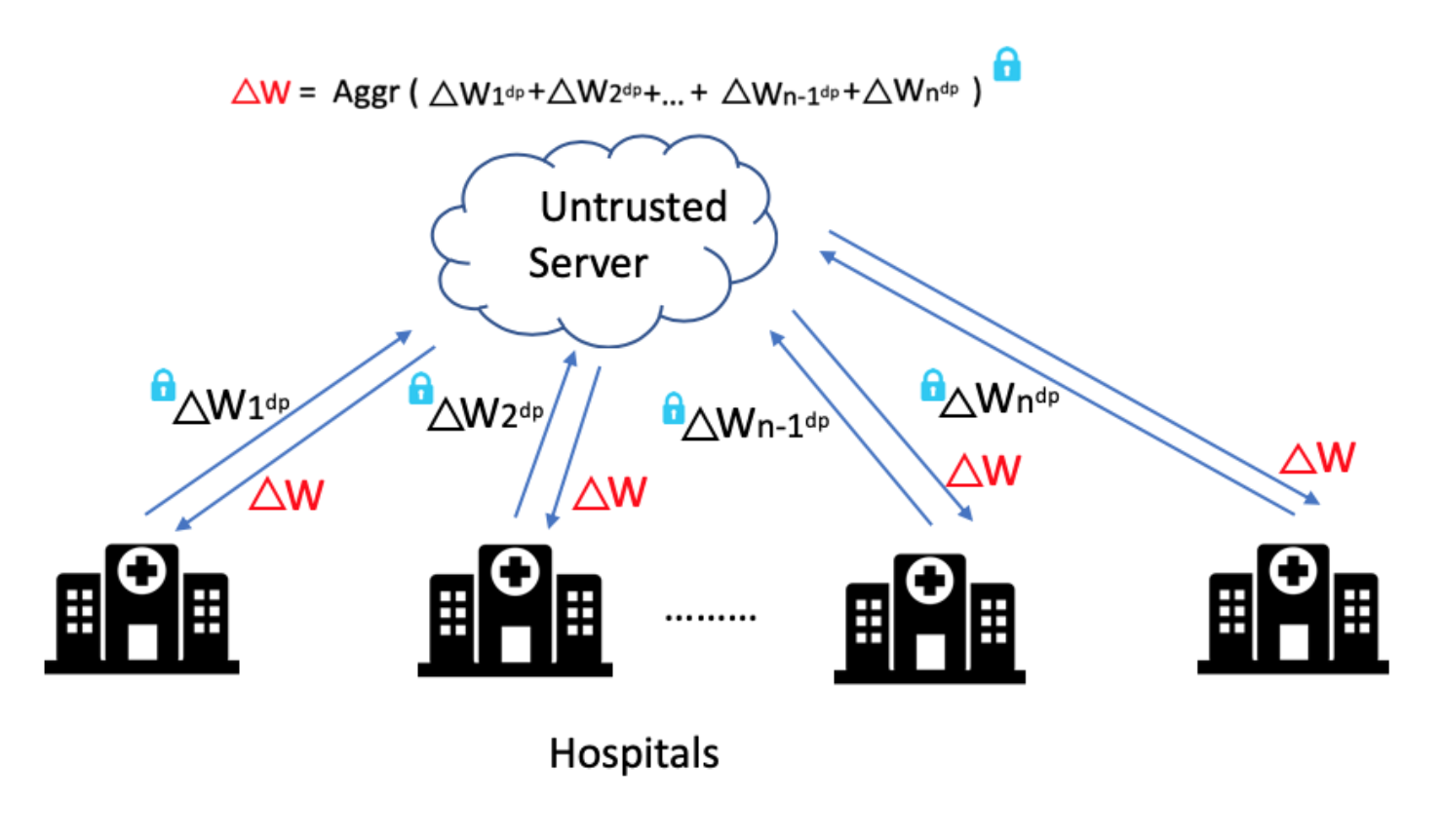

Hospitals are understandably cautious about sharing data in a way that could leak it to competitors. One existing approach to this problem is “federated learning” (FL), a technique that enables distributed clients to collaboratively train a shared machine learning model while keeping their training data local.
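
To make the idea concrete, here is a minimal Python sketch of federated averaging, one common FL algorithm. It is not PrivacyFL’s code: the three hypothetical "hospitals", the one-variable linear model, and the function names `local_sgd` and `fed_avg` are illustrative assumptions. Each client trains on its own data, and only model weights travel to the server, which averages them in proportion to each client's dataset size.

```python
def local_sgd(weights, data, lr=0.1, epochs=5):
    """Run a few epochs of per-example gradient descent on one client's data.

    The model is a one-variable linear fit y = w * x + b. The raw `data`
    stays inside this function; only the updated weights are returned.
    """
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y   # prediction error on this example
            w -= lr * err * x       # gradient of 0.5 * err**2 w.r.t. w
            b -= lr * err           # gradient of 0.5 * err**2 w.r.t. b
    return (w, b)


def fed_avg(global_weights, client_datasets, rounds=50):
    """Federated averaging: clients train locally, the server averages weights."""
    for _ in range(rounds):
        updates = [local_sgd(global_weights, data) for data in client_datasets]
        sizes = [len(data) for data in client_datasets]
        total = sum(sizes)
        # Weight each client's model by how many examples it trained on.
        global_weights = tuple(
            sum(u[i] * n for u, n in zip(updates, sizes)) / total
            for i in range(2)
        )
    return global_weights


# Three hypothetical hospitals, each holding different samples of y = 2x + 1.
hospitals = [
    [(i / 30, 2 * (i / 30) + 1) for i in range(k, 30, 3)]
    for k in range(3)
]
w, b = fed_avg((0.0, 0.0), hospitals)
print(f"w = {w:.3f}, b = {b:.3f}")  # should approach w = 2, b = 1
```

The server never sees a single data point, yet the shared model recovers the relationship present across all three datasets. That shared model's weights are exactly what the privacy concerns below are about.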

However, even cutting-edge FL methods raise privacy concerns, since information about the underlying datasets can leak through the trained model's parameters or weights. Guaranteeing privacy in these circumstances generally requires skilled programmers to spend significant time tuning parameters, which isn’t practical for most organizations.

A team from MIT CSAIL thinks that medical organizations and others would benefit from its new system, PrivacyFL, which serves as a real-world simulator for secure, privacy-preserving FL. Its key features include latency simulation, robustness to client departure, support for both centralized and decentralized learning, and configurable privacy and security mechanisms based on differential privacy and secure multiparty computation.
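
The two privacy mechanisms named above can be illustrated with a small Python sketch, assuming a simple setup rather than PrivacyFL’s actual design. Each client clips its update and adds Gaussian noise (the Gaussian mechanism from differential privacy), then splits the noisy update into additive secret shares so that no single party sees an individual hospital's values, only the sum. The constants `PRIME` and `SCALE`, the parameters `clip` and `sigma`, and all function names are hypothetical; `sigma` is not calibrated to any specific privacy budget.

```python
import random
import secrets

PRIME = 2**61 - 1   # modulus for additive secret sharing (illustrative choice)
SCALE = 10**6       # fixed-point scale: real numbers -> integers mod PRIME


def privatize(update, clip=1.0, sigma=0.5, rng=random):
    """Gaussian mechanism: clip the update's L2 norm, then add Gaussian noise.

    `sigma` is a hypothetical noise multiplier, not calibrated to any
    particular (epsilon, delta) privacy budget.
    """
    norm = sum(v * v for v in update) ** 0.5
    factor = min(1.0, clip / norm) if norm > 0 else 1.0
    return [v * factor + rng.gauss(0.0, sigma * clip) for v in update]


def encode(v):
    return round(v * SCALE) % PRIME


def decode(x):
    # Residues above PRIME // 2 stand for negative numbers.
    if x > PRIME // 2:
        x -= PRIME
    return x / SCALE


def share(update, n_parties):
    """Split an update into n_parties random vectors that sum to it mod PRIME."""
    encoded = [encode(v) for v in update]
    shares = [[secrets.randbelow(PRIME) for _ in encoded]
              for _ in range(n_parties - 1)]
    last = [(e - sum(s[j] for s in shares)) % PRIME
            for j, e in enumerate(encoded)]
    return shares + [last]


def secure_sum(all_client_shares):
    """Each party sums only the shares it received; combining the partial
    sums reveals the total of all updates, not any individual update."""
    n_parties = len(all_client_shares[0])
    dim = len(all_client_shares[0][0])
    partial = [[0] * dim for _ in range(n_parties)]
    for client_shares in all_client_shares:
        for p, vec in enumerate(client_shares):
            for j in range(dim):
                partial[p][j] = (partial[p][j] + vec[j]) % PRIME
    totals = [sum(partial[p][j] for p in range(n_parties)) % PRIME
              for j in range(dim)]
    return [decode(t) for t in totals]


# Three hypothetical hospitals privatize their updates, then secret-share them.
updates = [[0.5, -0.25], [1.0, 0.75], [-0.5, 0.5]]
noisy = [privatize(u) for u in updates]
print(secure_sum([share(u, 3) for u in noisy]))  # noisy sum of the clipped updates
```

Each individual share is a uniformly random number, so a party holding one share learns nothing about a hospital's update; only the aggregate is revealed, and the added noise limits what even that aggregate says about any one dataset.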

MIT principal research scientist Lalana Kagal says that simulators are essential for federated learning environments for several reasons.

- To evaluate accuracy. Kagal says such a system “should be able to simulate federated models and compare their accuracy with local models.”

- To evaluate total time taken. Communication between distant clients can become expensive, and simulations are useful for evaluating whether client-client and client-server communications are worth that cost.

- To evaluate approximate bounds on convergence and the time taken to converge.

- To simulate real-time dropouts. With PrivacyFL, clients may drop out at any time.
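
A toy version of the kind of simulation described in this list might look like the following Python sketch. It assumes fixed per-client latencies, a per-round dropout probability, and a server that waits up to a timeout and then averages whatever updates arrived. `SimClient`, `simulate_round`, and `simulate` are hypothetical names, not PrivacyFL’s interface.

```python
import random
from dataclasses import dataclass


@dataclass
class SimClient:
    name: str
    latency_s: float     # fixed simulated time to send an update to the server
    dropout_prob: float  # chance the client drops out in any given round
    update: float        # stand-in for the client's model update


def simulate_round(clients, timeout_s, rng):
    """Collect updates from clients that neither drop out nor exceed the timeout.

    Returns (average of received updates or None, simulated round time, responder names).
    """
    received = [
        c for c in clients
        if rng.random() >= c.dropout_prob and c.latency_s <= timeout_s
    ]
    if not received:
        return None, timeout_s, []
    # The server stops at the slowest responder, or waits out the timeout
    # if any client failed to report.
    if len(received) == len(clients):
        elapsed = max(c.latency_s for c in received)
    else:
        elapsed = timeout_s
    avg = sum(c.update for c in received) / len(received)
    return avg, elapsed, [c.name for c in received]


def simulate(clients, rounds, timeout_s, seed=0):
    """Run several rounds and report the total simulated time and per-round results."""
    rng = random.Random(seed)
    total_time = 0.0
    history = []
    for _ in range(rounds):
        avg, elapsed, names = simulate_round(clients, timeout_s, rng)
        total_time += elapsed
        history.append((avg, names))
    return total_time, history


clients = [
    SimClient("hospital_a", latency_s=0.2, dropout_prob=0.1, update=1.0),
    SimClient("hospital_b", latency_s=0.5, dropout_prob=0.1, update=3.0),
    SimClient("hospital_c", latency_s=1.5, dropout_prob=0.0, update=5.0),  # too slow
]
total, history = simulate(clients, rounds=5, timeout_s=1.0)
print(f"total simulated time: {total:.1f} s")
for avg, names in history:
    print(avg, names)
```

Even this toy model shows the trade-offs in the list: a single slow or distant client stretches every round to the timeout, and dropouts change which updates make it into each average.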

Using the lessons learned with this simulator, the team is in the process of developing an end-to-end federated learning system that can be used in real-world scenarios. For example, such a system could be used by collaborating hospitals to train privacy-preserving, robust models to predict complex diseases.

Research assistant Vaikkunth Mugunthan was the lead on the paper, whose authors also included undergraduate Anton Peraire-Bueno. The team presented it virtually this week at the ACM International Conference on Information and Knowledge Management (CIKM).