Together, cameras and computers can pull off some seriously stunning feats. Giving computers vision has helped us fight wildfires in California, understand complex and treacherous roads, and even see around corners.

Specifically, seven years ago a group of MIT researchers created a new imaging system that used floors, doors, and walls as “mirrors” to understand information about scenes outside a normal line of sight. Using special lasers to produce recognizable 3D images, the work opened up a realm of possibilities in letting us better understand what we can’t see.

Recently, a different group of scientists from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) has built on this work, but this time with no special equipment needed: They developed a method that can reconstruct hidden video from just the subtle shadows and reflections on an observed pile of clutter. This means that, with a video camera turned on in a room, they can reconstruct a video of an unseen corner of the room, even if it falls outside the camera's field of view.

By observing the interplay of shadow and geometry in video, the team’s algorithm predicts the way that light travels in a scene, which is known as “light transport.” The system then uses that to estimate the hidden video from the observed shadows — and it can even construct the silhouette of a live-action performance.

This type of image reconstruction could one day benefit many facets of society: Self-driving cars could better understand what’s emerging from behind corners, elder-care centers could enhance safety for their residents, and search-and-rescue teams could even improve their ability to navigate dangerous or obstructed areas.

The technique is “passive,” meaning it uses no lasers or other interventions in the scene. Processing currently takes about two hours, but the researchers say the method could eventually help reconstruct scenes outside the traditional line of sight for the applications described above.

“You can achieve quite a bit with non-line-of-sight imaging equipment like lasers, but in our approach you only have access to the light that's naturally reaching the camera, and you try to make the most out of the scarce information in it,” says Miika Aittala, former CSAIL postdoc and current research scientist at NVIDIA, and the lead researcher on the new technique. “Given the recent advances in neural networks, this seemed like a great time to visit some challenges that, in this space, were considered largely unapproachable before.”

To capture this unseen information, the team uses subtle, indirect lighting cues, such as shadows and highlights from the clutter in the observed area.

In a way, a pile of clutter behaves somewhat like a pinhole camera, similar to something you might build in an elementary school science class: It blocks some light rays, but allows others to pass through, and these paint an image of the surroundings wherever they hit. But where a pinhole camera is designed to let through just the right rays to form a readable picture, a general pile of clutter produces an image that is scrambled (by the light transport) beyond recognition, into a complex play of shadows and shading.

You can think of the clutter, then, as a mirror that gives you a scrambled view into the surroundings around it — for example, behind a corner where you can’t see directly.

The challenge addressed by the team's algorithm was to unscramble and make sense of these lighting cues. Specifically, the goal was to recover a human-readable video of the activity in the hidden scene. The video observed on the clutter is a multiplication of the light transport and the hidden video, so recovering the hidden video means separating those two factors.

However, unscrambling proved to be a classic “chicken-or-egg” problem. To figure out the scrambling pattern, a user would need to know the hidden video already, and vice versa.

“Mathematically, it’s like if I told you that I’m thinking of two secret numbers, and their product is 80. Can you guess what they are? Maybe 40 and 2? Or perhaps 371.8 and 0.2152? In our problem, we face a similar situation at every pixel,” says Aittala. “Almost any hidden video can be explained by a corresponding scramble, and vice versa. If we let the computer choose, it’ll just do the easy thing and give us a big pile of essentially random images that don’t look like anything.”
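The same ambiguity can be shown with small matrices. The sketch below assumes the "multiplication" is a matrix product, with the light transport as a matrix `T` and the hidden video as a matrix `L` whose columns are frames. The sizes, the variable names, and the random data are all hypothetical and chosen only for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 50 observed pixels, 8 hidden pixels, 20 frames.
n_obs, n_hidden, n_frames = 50, 8, 20

# T: assumed light transport matrix (how each hidden pixel lights each observed pixel).
# L: assumed hidden video, one column per frame.
T = rng.random((n_obs, n_hidden))
L = rng.random((n_hidden, n_frames))

# Forward model: the camera records the product of the two factors.
Z = T @ L

# Any invertible mixing matrix A yields a different pair with the same product.
# Adding a large diagonal makes A strictly diagonally dominant, hence invertible.
A = rng.random((n_hidden, n_hidden)) + n_hidden * np.eye(n_hidden)
T_alt = T @ np.linalg.inv(A)
L_alt = A @ L
Z_alt = T_alt @ L_alt

same_observation = np.allclose(Z, Z_alt)    # both pairs explain the recording
different_video = not np.allclose(L, L_alt)  # yet the "hidden videos" differ
print(same_observation, different_video)
```

Both pairs reproduce the recorded video equally well, which is why the observation alone cannot single out the true hidden video.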

With that in mind, the team focused on breaking the ambiguity by specifying algorithmically that they wanted a “scrambling” pattern that corresponds to plausible real-world shadowing and shading, and a hidden video that has edges and objects that move coherently.

The team also used the surprising fact that neural networks naturally prefer to express “image-like” content, even when they’ve never been trained to do so, which helped break the ambiguity. The algorithm trains two neural networks simultaneously, each specialized for the one target video only, using ideas from a machine learning concept called Deep Image Prior. One network produces the scrambling pattern, and the other estimates the hidden video. The networks are rewarded when the combination of these two factors reproduces the video recorded from the clutter, driving them to explain the observations with plausible hidden data.
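The idea of optimizing both factors at once against the recorded video can be sketched in a heavily simplified form. This is not the team's implementation: it swaps the two neural networks for two plain matrices updated by gradient descent, assumes the combination is a matrix product, and uses synthetic data. The names `T_est`, `L_est`, and `loss`, the sizes, and the step size are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_obs, n_hidden, n_frames = 30, 4, 25  # hypothetical sizes

# Synthetic recording, built from random factors purely to have something to fit.
Z = rng.random((n_obs, n_hidden)) @ rng.random((n_hidden, n_frames))

# Two unknown factors, standing in for the outputs of the two networks.
T_est = rng.random((n_obs, n_hidden))     # estimated scrambling pattern
L_est = rng.random((n_hidden, n_frames))  # estimated hidden video

def loss(T, L):
    """Mean squared error between the recombined factors and the recording."""
    return float(np.mean((T @ L - Z) ** 2))

initial_loss = loss(T_est, L_est)

lr = 0.002  # small step size, assumed here to keep the updates stable
for _ in range(3000):
    residual = T_est @ L_est - Z
    # Gradients of 0.5 * ||T L - Z||^2 with respect to each factor,
    # both computed before either factor is changed.
    grad_T = residual @ L_est.T
    grad_L = T_est.T @ residual
    T_est -= lr * grad_T
    L_est -= lr * grad_L

final_loss = loss(T_est, L_est)
print(initial_loss, final_loss)
```

With plain matrices there is nothing to favor a plausible answer, so the recovered factors need not resemble the true ones even when the loss is low. In the article's description, generating the factors with neural networks is what pushes the solution toward image-like content.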

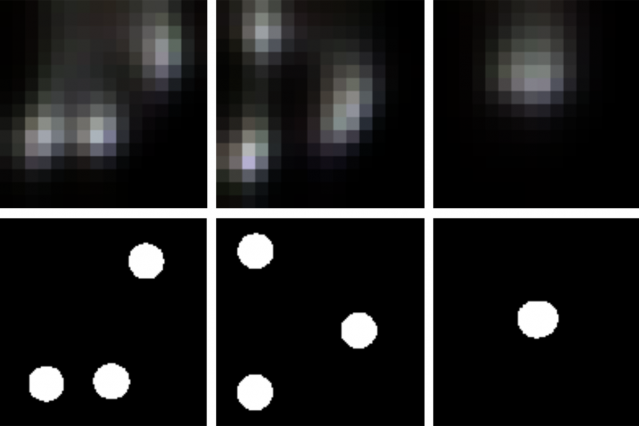

To test the system, the team first piled up objects on one wall, and either projected a video or physically moved themselves on the opposite wall. From this, they were able to reconstruct videos where you could get a general sense of what motion was taking place in the hidden area of the room.

In the future, the team hopes to improve the overall resolution of the system, and eventually test the technique in an uncontrolled environment.

Aittala wrote a new paper on the technique alongside CSAIL PhD students Prafull Sharma, Lukas Murmann, and Adam Yedidia, with MIT professors Fredo Durand, Bill Freeman, and Gregory Wornell. They will present it next week at the Conference on Neural Information Processing Systems in Vancouver, British Columbia.