For roboticists, one challenge towers above all others: generalization – the ability to create machines that can adapt to any environment or condition. Since the 1970s, the field has evolved from writing sophisticated programs to using deep learning, teaching robots to learn directly from human behavior. But a critical bottleneck remains: data quality. To improve, robots need to encounter scenarios that push the boundaries of their capabilities, operating at the edge of their mastery. This process traditionally requires human oversight, with operators carefully challenging robots to expand their abilities. As robots become more sophisticated, this hands-on approach hits a scaling problem: the demand for high-quality training data far outpaces humans' ability to provide it.

Now, a team of MIT CSAIL researchers has developed a novel approach to robot training that could significantly accelerate the deployment of adaptable, intelligent machines in real-world environments. The new system, called "LucidSim," uses recent advances in generative AI and physics simulators to create diverse and realistic virtual training environments, helping robots achieve expert-level performance in difficult tasks without any real-world data.

LucidSim combines physics simulation with generative AI models, addressing one of the most persistent challenges in robotics: transferring skills learned in simulation to the real world. “A fundamental challenge in robot learning has long been the ‘sim-to-real gap’ – the disparity between simulated training environments and the complex, unpredictable real world,” says MIT CSAIL postdoctoral associate Ge Yang, a lead researcher on LucidSim. “Previous approaches often relied on depth sensors, which simplified the problem but missed crucial real-world complexities.”

The multipronged system is a blend of different technologies. At its core, LucidSim uses large language models to generate various structured descriptions of environments. These descriptions are then transformed into images using generative models. To ensure that these images reflect real-world physics, an underlying physics simulator is used to guide the generation process.

The Birth of an Idea: From Burritos to Breakthroughs

The inspiration for LucidSim came from an unexpected place: a conversation outside Beantown Taqueria in Cambridge. “We wanted to teach vision-equipped robots how to improve using human feedback. But then, we realized we didn't have a pure vision-based policy to begin with,” says Alan Yu, an undergraduate student at MIT and co-lead on LucidSim. “We kept talking about it as we walked down the street, and then we stopped outside the taqueria for about half an hour. That's where we had our moment.”

To cook up their data, the team generated realistic images by extracting depth maps, which provide geometric information, and semantic masks, which label different parts of an image, from the simulated scene. They quickly realized, however, that with tight control over the composition of the image content, the model would produce nearly identical images from the same prompt. So, they devised a way to source diverse text prompts from ChatGPT.

This approach, however, produced only a single image. To make short, coherent videos that serve as little “experiences” for the robot, the scientists developed another novel technique, called “Dreams In Motion (DIM).” The system computes the movement of each pixel between frames and uses it to warp a single generated image into a short, multi-frame video. Dreams In Motion does this by considering the 3D geometry of the scene and the relative changes in the robot’s perspective.
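
The warping idea can be illustrated with a minimal sketch. This is not the team's implementation. It assumes a pinhole camera with a known intrinsics matrix `K`, a per-pixel depth map from the simulator, and a relative pose `(R, t)` that maps points from the first camera frame into the next one. The function names `pixel_flow`, `warp_image`, and `dream_frames` are hypothetical, and occlusions and holes are handled only crudely.

```python
import numpy as np

def pixel_flow(depth, K, R, t):
    """Per-pixel displacement (du, dv) caused by moving the camera by (R, t).

    Assumes every depth value is positive and every point stays in front of
    the moved camera; (R, t) maps camera-1 coordinates to camera-2 coordinates.
    """
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    pix = np.stack([u, v, np.ones_like(u)], axis=-1).astype(float)
    # Back-project each pixel to a 3D point using its depth.
    points = (pix @ np.linalg.inv(K).T) * depth[..., None]
    # Express the points in the new camera frame, then project them again.
    moved = points @ R.T + t
    proj = moved @ K.T
    return proj[..., :2] / proj[..., 2:3] - pix[..., :2]

def warp_image(image, flow):
    """Forward-warp an image by moving each pixel along its flow vector.

    Pixels that leave the frame are dropped and unfilled pixels stay zero.
    If several pixels land on the same target, which one wins is arbitrary.
    """
    h, w = image.shape[:2]
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    u2 = np.rint(u + flow[..., 0]).astype(int)
    v2 = np.rint(v + flow[..., 1]).astype(int)
    inside = (u2 >= 0) & (u2 < w) & (v2 >= 0) & (v2 < h)
    out = np.zeros_like(image)
    out[v2[inside], u2[inside]] = image[inside]
    return out

def dream_frames(image, depth, K, poses):
    """Turn one image into a short clip, one warped frame per camera pose."""
    return [warp_image(image, pixel_flow(depth, K, R, t)) for R, t in poses]
```

In this sketch, each entry of `poses` is measured relative to the frame that produced the original image, so every output frame is warped directly from that single image rather than from the previous frame.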

"We outperform domain randomization, a method developed in 2017 that applies random colors and patterns to objects in the environment, which is still considered the go-to method these days," says Yu. "While this technique generates diverse data, it lacks realism. LucidSim addresses both diversity and realism problems. It’s exciting that even without seeing the real world during training, the robot can recognize and navigate obstacles in real environments."

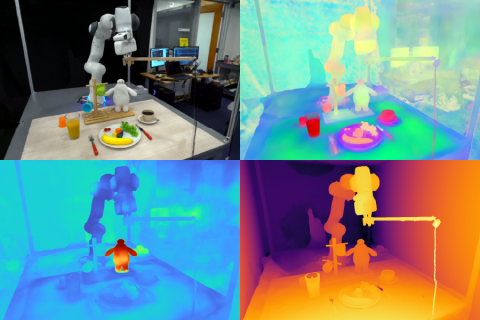

The team is particularly excited about the potential of applying LucidSim to domains outside quadruped locomotion and parkour, their main testbed. One example is mobile manipulation, where a mobile robot is tasked with handling objects in an open area and where color perception is critical. “Today, these robots still learn from real-world demonstrations,” says Yang. “Although collecting demonstrations is easy, scaling a real-world robot teleoperation setup to thousands of skills is challenging because a human has to physically set up each scene. We hope to make this easier, thus qualitatively more scalable, by moving data collection into a virtual environment.”

The team put LucidSim to the test against an alternative, where an expert teacher demonstrates the skill for the robot to learn from. The results were surprising: robots trained by the expert struggled, succeeding only 15 percent of the time – and even quadrupling the amount of expert training data barely moved the needle. But when robots collected their own training data through LucidSim, the story changed dramatically. Just doubling the dataset size catapulted success rates to 88 percent. "And giving our robot more data monotonically improves its performance – eventually, the student becomes the expert," says Yang.

“One of the main challenges in sim-to-real transfer for robotics is achieving visual realism in simulated environments,” says Stanford University Assistant Professor of Electrical Engineering Shuran Song, who wasn’t involved in the research. “The LucidSim framework provides an elegant solution by using generative models to create diverse, highly realistic visual data for any simulation. This work could significantly accelerate the deployment of robots trained in virtual environments to real-world tasks.”

From the streets of Cambridge to the cutting edge of robotics research, LucidSim is paving the way toward a new generation of intelligent, adaptable machines – ones that learn to navigate our complex world without ever setting foot in it.

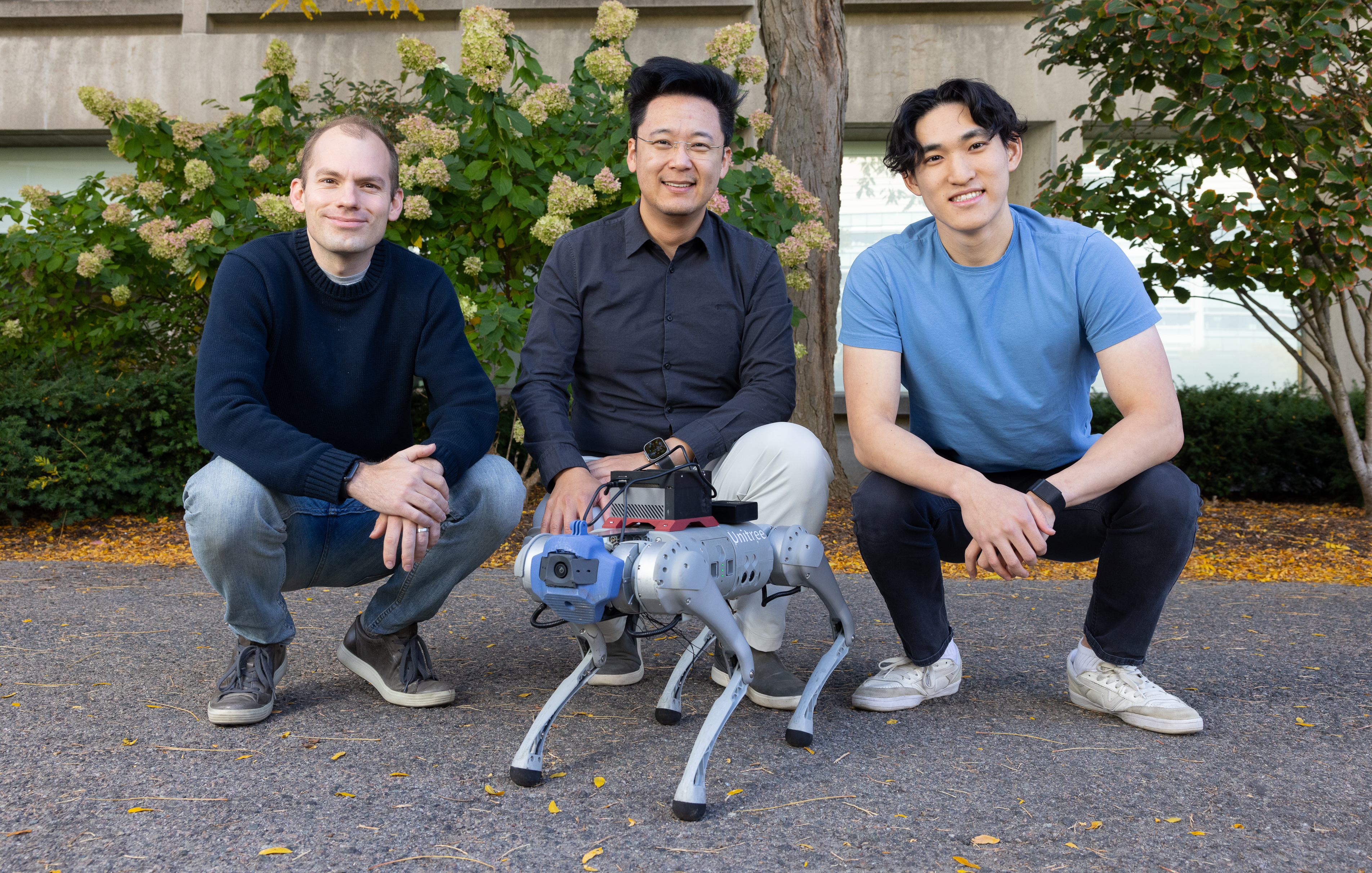

Yu and Yang wrote the paper with four fellow CSAIL affiliates: mechanical engineering postdoc Ran Choi; undergraduate researcher Yajvan Ravan; John Leonard, Samuel C. Collins Professor of Mechanical and Ocean Engineering in the MIT Department of Mechanical Engineering; and MIT Associate Professor Phillip Isola. Their work was supported, in part, by a Packard Fellowship, a Sloan Research Fellowship, the Office of Naval Research, Singapore’s Defence Science and Technology Agency, Amazon, MIT Lincoln Laboratory, and the National Science Foundation Institute for Artificial Intelligence and Fundamental Interactions. The researchers will present their work at the Conference on Robot Learning (CoRL) in early November.